Touching Musical Instruments

This PhD research project ran from 2013 to 2018 and was funded by the Engineering and Physical Sciences Research Council (EPSRC) as part of the Doctoral Training Centre in Media and Arts Technology at Queen Mary University of London (EP/G03723X/1). The full thesis document can be found here.

Touch in musical performance

The sense of touch plays a fundamental role in musical performance: alongside hearing, it is the primary sensory modality used when interacting with musical instruments. Learning to play a musical instrument is one of the most developed haptic cultural practices, and within acoustic musical practice at large, the importance of touch and its close relationship to virtuosity and expression is well recognised.

With digital musical instruments (DMIs) – instruments involving a combination of sensors and a digital sound engine – touch-mediated interaction remains the foremost means of control, but the interfaces of such instruments do not yet engage with the full spectrum of a performer's sensorimotor capabilities. This poses compelling questions for digital instrument design: how do the nuance and richness of physical interaction with an instrument manifest themselves in the digital domain? Which design parameters are most important for haptic experience, and how do these parameters affect musical performance? Built around three practical studies that use DMIs as technology probes, this thesis addresses these questions from the point of view of design, of empirical musicology, and of tangible computing.

Tactile exploration and instrument navigation

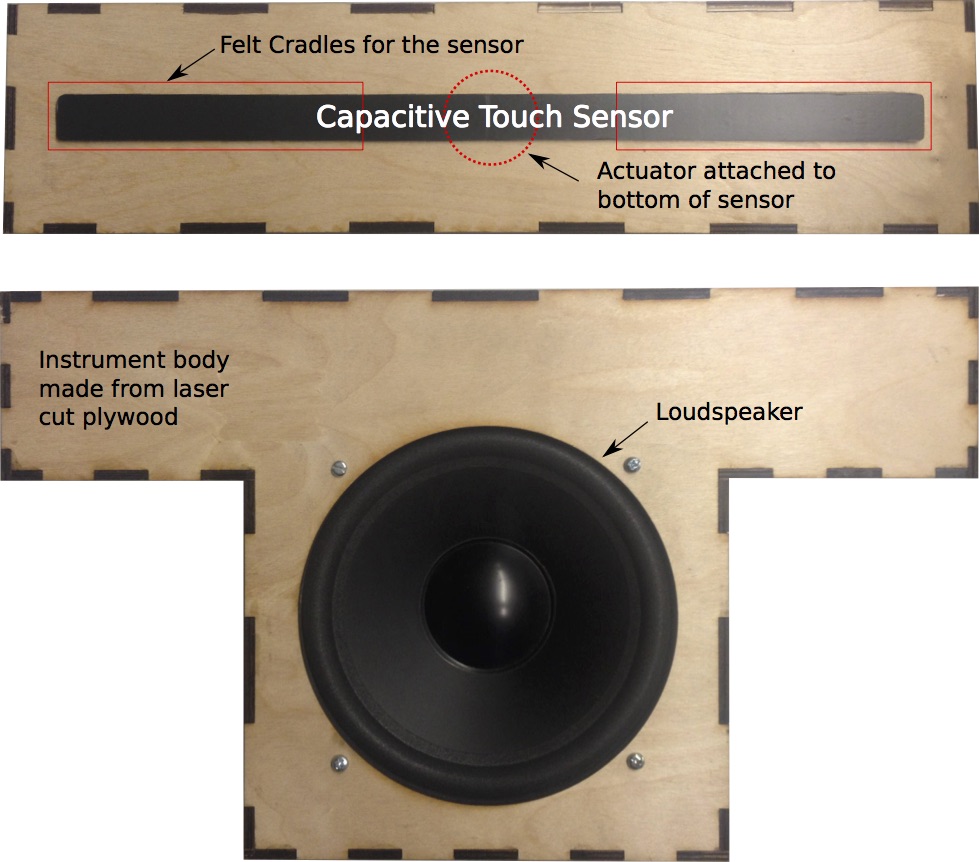

In the first study of this PhD, musicians played a DMI with continuous pitch control and vibrotactile feedback in order to understand how dynamic tactile feedback can be implemented and how it influences musicians’ experience and performance. The results suggest that certain vibrotactile feedback conditions can increase musicians’ tuning accuracy, but can also disrupt temporal performance.

Auditory-haptic latency and instrument quality

The second study examines the influence of asynchronies between audio and haptic feedback. Two groups of musicians, amateurs and professional percussionists, were tasked with performing on a percussive DMI with variable action-sound latency. Differences between the two groups in terms of temporal accuracy and quality judgements illustrate the complex effects of asynchronous multimodal feedback.

Control intimacy, richness and physical supports

In the third study, non-musicians and guitarists were observed playing guitar-derivative DMIs with variable levels of control richness. The results from this study help clarify the relationship between tangible design factors, sensorimotor expertise and instrument behaviour.

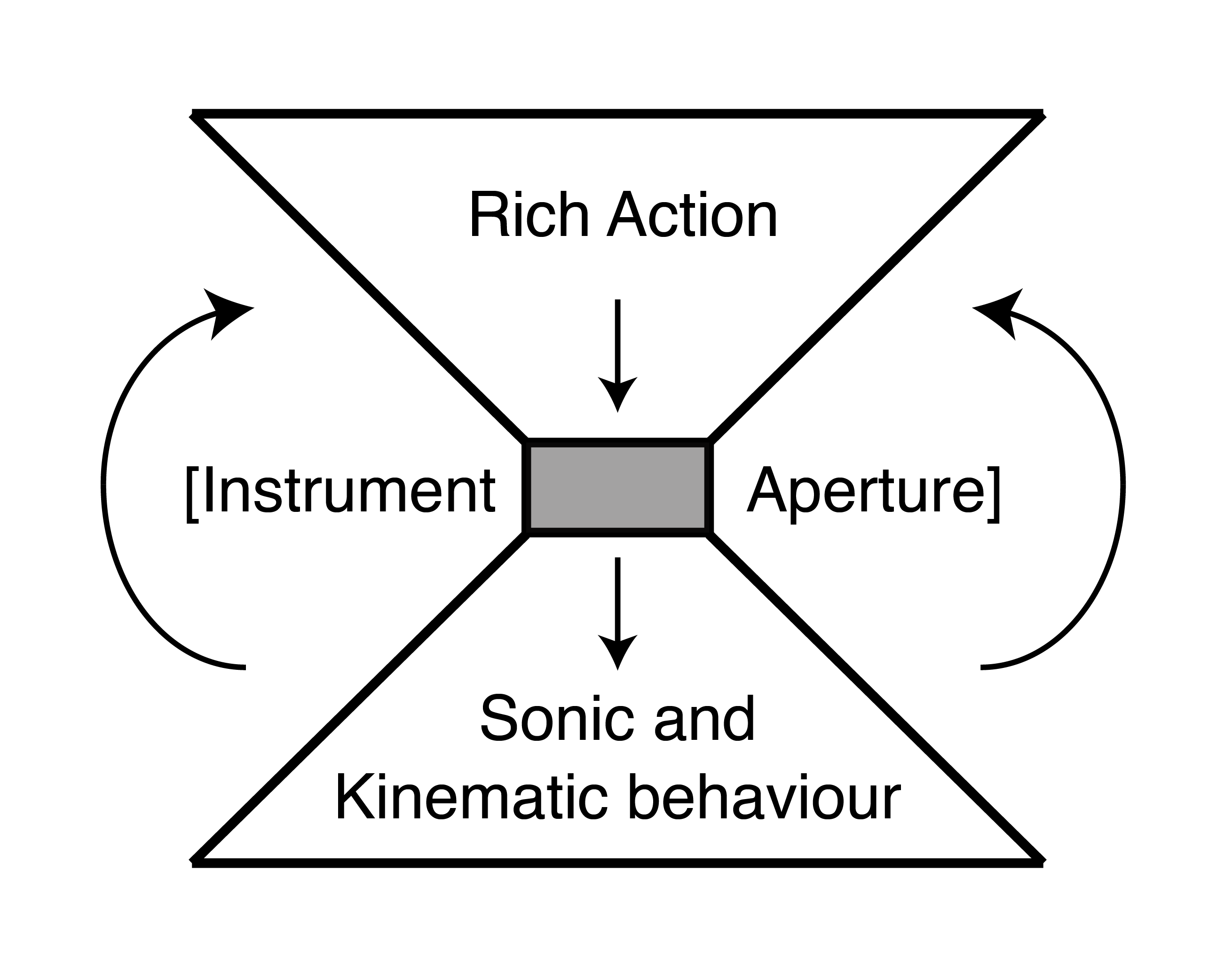

This thesis introduces a descriptive model of performer-instrument interaction, the projection model, which unites the design investigations from each study and provides a series of reflections and suggestions on the role of touch in DMI design.

R. Jack, J. Harrison, F. Morreale and A. McPherson. Democratising DMIs: the relationship of expertise and control intimacy. Proc. New Interfaces for Musical Expression, Blacksburg, Virginia, USA. 2018. (Winner of best paper award)